Nvidia B300 Server Crisis: AI Infrastructure Costs Double in China

Nvidia B300 AI servers reach $1 million in China amid US chip export restrictions, creating unprecedented infrastructure challenges for AI development.

An analysis of the competition between Google's Tensor Processing Units and Nvidia's graphics processors for AI inference workloads, examining performance, economics, and market dynamics.

Cloudflare's Dynamic Workers launch in March 2026 introduces a V8 isolate-based runtime that delivers a 100x performance improvement over traditional containers. Learn how this technology enables AI agent sandboxes to start in milliseconds.

New neuromorphic chips using memristors and hafnium oxide could reduce AI energy consumption by 70%, addressing the growing power crisis in data centers.

MCP adoption is accelerating across enterprise and developer platforms, with 97M+ installs, enterprise ad platforms, and IDE integrations making it the de facto AI tool standard.

A practical guide to implementing robust fallback mechanisms in AI systems, covering graceful degradation, circuit breakers, human-in-the-loop patterns, and cost control strategies.

How AI compilers bridge the gap between model development and efficient hardware execution, reducing latency and costs.

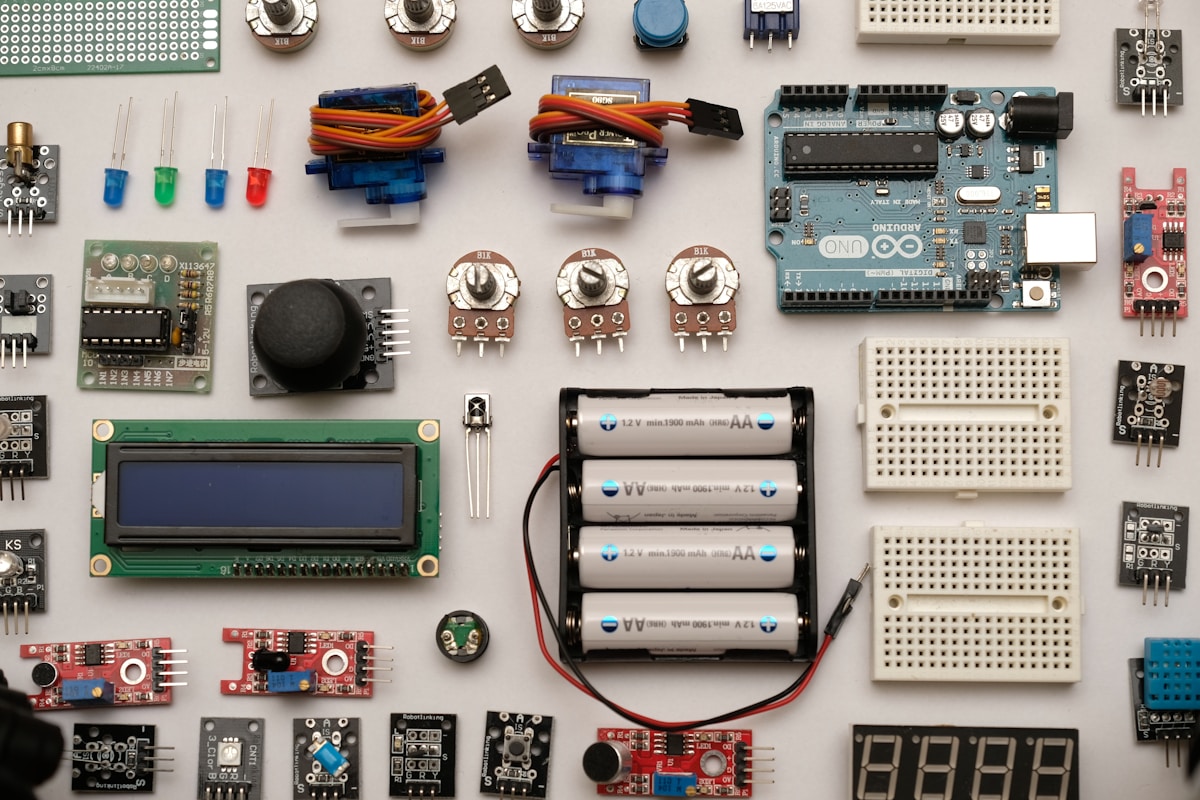

Explore how AI models are being deployed on edge devices—from smartphones to IoT sensors—enabling real-time inference without cloud connectivity.

A comprehensive guide to building production AI infrastructure, covering model serving, caching, monitoring, and scaling strategies for enterprise deployments.

Apple's testing of multiple AI smart glasses prototypes signals a major shift in wearable computing, potentially reshaping how we interact with artificial intelligence in daily life.

Jensen Huang's assertion that 'we've achieved AGI' sparks intense debate across the AI community about the definition, implications, and future of artificial general intelligence.

How quantum computing will transform artificial intelligence, from optimization problems to machine learning, and what the convergence means for the future.

AI companies are constructing enormous natural gas power plants to meet the exploding energy demands of data centers, raising environmental and climate concerns.

Samsung Electronics expected to post record quarterly profit as AI boom drives unprecedented chip demand and pricing

Loughborough University researchers develop revolutionary chip using material physics that could transform AI energy consumption

NVIDIA maintains iron grip on AI accelerator market with 80% share while Blackwell architecture powers the AI factory era

How Physical AI is transforming robotics and hardware with intelligent edge sensing and ubiquitous computing

NVIDIA's Blackwell architecture is transforming AI infrastructure with 3x faster training and nearly 2x performance per dollar compared to the previous generation. The GB200 NVL72 delivers 30x faster inference for trillion-parameter LLMs.

Quantum computing and artificial intelligence are converging in 2026, with major breakthroughs enabling applications once considered computationally impossible. From D-Wave's open-source toolkit to Quantinuum's generative quantum AI, the quantum-AI stack is becoming reality.

AMD's MI450 accelerator is set to launch in the second half of 2026 with a massive 6GW deal from Meta, marking a significant challenge to Nvidia's market leadership in AI computing.