Physical AI Revolution 2026: From Edge Computing to Ubiquitous Intelligence

How Physical AI is transforming robotics and hardware with intelligent edge sensing and ubiquitous computing

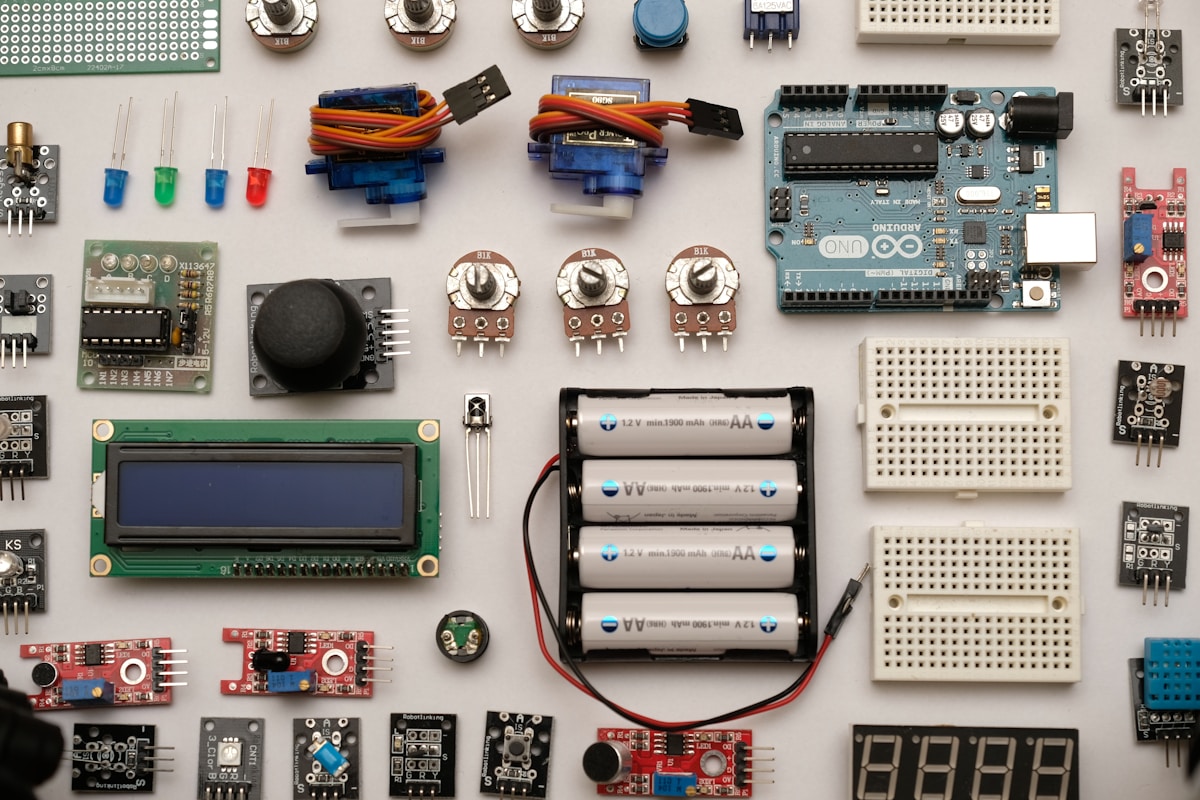

The boundaries between digital artificial intelligence and physical hardware are dissolving. In 2026, a new paradigm, Physical AI, is emerging, in which intelligent algorithms run directly on physical systems, from manufacturing robots to autonomous vehicles. Driven by advances in edge AI, sensor fusion, and compute architecture, this shift lets machines perceive, reason, and act in real-world environments instead of following fixed scripts.

Introduction

For decades, artificial intelligence operated primarily in the digital realm: processing data, generating text, and creating images. Meanwhile, physical robots ran largely on pre-programmed instructions and could not adapt to the fluid complexity of real-world environments. In 2026, this division is collapsing. Physical AI fuses intelligent algorithms with physical systems, creating machines that can perceive, reason, and act in physical environments in real time.

This revolution extends from manufacturing floors to household robots, from autonomous vehicles to space exploration systems. Understanding Physical AI is essential for anyone seeking to comprehend the next wave of technological transformation.

The Rise of Physical AI

Physical AI emerges from the convergence of three technological trends:

1. Edge AI Acceleration

Modern AI accelerators can now execute complex inference models locally on devices, removing the dependency on cloud connectivity. NVIDIA's latest Jetson platforms, Google's Edge TPU, and Apple's Neural Engine demonstrate that sophisticated AI can operate within the constraints of embedded systems.

2. Sensor Fusion Sophistication

Modern robots are equipped with sophisticated sensor arrays—cameras, LIDAR, radar, and tactile sensors—that provide rich environmental data. Combined with AI processing, these sensors enable real-time perception and response.
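To make the idea of fusing sensor data concrete, here is a minimal sketch of one common approach, inverse-variance weighting, which combines independent distance estimates so that the less noisy sensor counts for more. The `Reading` class, the `fuse` function, and all numbers are hypothetical and chosen for illustration; real systems typically use richer methods such as Kalman filters.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One range estimate from a single sensor (hypothetical structure)."""
    distance_m: float  # measured distance to an obstacle, in metres
    variance: float    # sensor noise variance, in metres squared


def fuse(readings: list[Reading]) -> Reading:
    """Combine independent readings using inverse-variance weighting."""
    if not readings:
        raise ValueError("need at least one reading")
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    fused_distance = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    # The fused variance is lower than that of any single input reading.
    return Reading(distance_m=fused_distance, variance=1.0 / total)


# Illustrative values only: a noisy camera depth estimate and a tighter LiDAR return.
camera = Reading(distance_m=4.30, variance=0.25)
lidar = Reading(distance_m=4.10, variance=0.01)
fused = fuse([camera, lidar])
print(f"fused distance: {fused.distance_m:.3f} m, variance: {fused.variance:.4f}")
```

The fused estimate lands close to the LiDAR value because its assumed variance is much smaller, while the camera still nudges the result slightly.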

3. Real-Time Compute Architecture

Specialized processors designed for low-latency inference enable robots to make split-second decisions. This is essential for safe operation in dynamic environments.
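A rough way to see why latency matters is to add up the time each pipeline stage takes and ask how far a robot moves before it can react. The sketch below does that arithmetic. The stage names, the latencies, the 50 ms budget, and the 2 m/s speed are all assumed values for illustration, not measurements from any real platform.

```python
def distance_during_latency(speed_m_s: float, latency_ms: float) -> float:
    """Distance travelled while the system is still computing its response."""
    return speed_m_s * (latency_ms / 1000.0)


def meets_budget(stage_latencies_ms: dict[str, float], budget_ms: float) -> bool:
    """True if the summed pipeline latency fits inside the control-loop budget."""
    return sum(stage_latencies_ms.values()) <= budget_ms


# Hypothetical per-stage latencies for an on-device perception-to-action pipeline.
pipeline = {"capture": 8.0, "inference": 15.0, "planning": 5.0, "actuation": 4.0}
total_ms = sum(pipeline.values())

print(f"total latency: {total_ms:.0f} ms")
print(f"fits a 50 ms budget: {meets_budget(pipeline, 50.0)}")
print(f"travel at 2 m/s before reacting: {distance_during_latency(2.0, total_ms):.3f} m")
```

Under these assumptions the robot covers a few centimetres before it responds; any extra delay, such as a network round trip, would lengthen that distance proportionally.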

Leaders in the Physical AI Race

Multiple companies are racing to lead the Physical AI revolution:

| Company | Focus Area | Key Development |
|---|---|---|
| Boston Dynamics | Humanoid Robotics | Atlas production model operating in Hyundai factory |
| Figure AI | General-Purpose Humanoids | Figure 02 deployed in warehouse operations |
| Amazon Robotics | Warehouse Automation | Autonomous fulfillment systems |
| NVIDIA | AI Foundation | Omniverse simulation + robotics foundation models |
| Google DeepMind | Gemini Robotics | Real-time robot control with general-purpose AI |

Boston Dynamics Atlas: A Production Humanoid

Perhaps no company represents the Physical AI breakthrough more dramatically than Boston Dynamics. In early 2026, the company unveiled the production-ready Atlas humanoid robot operating autonomously inside Hyundai's manufacturing facility in Georgia.

This represents a watershed moment: a human-scale humanoid robot functioning independently in a real industrial environment. Atlas can navigate complex terrain, manipulate objects with precision, and operate for extended periods without human intervention.

The Role of Simulation

A critical enabler of Physical AI is simulation technology. NVIDIA's Omniverse platform enables robots to train in virtual environments, learning from millions of simulated interactions before deployment in the physical world. This accelerates development cycles while reducing risk.
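The general pattern of learning in simulation before deployment can be illustrated with a toy example. The sketch below is not Omniverse code and uses no NVIDIA API. It is a hand-written one-dimensional positioning task in which friction is randomized per episode (a simplified form of domain randomization, introduced here as an assumption), and candidate controller gains are scored across many simulated episodes. All function names, dynamics, and parameter ranges are hypothetical.

```python
import random


def simulate_episode(gain: float, friction: float, steps: int = 200, dt: float = 0.02) -> float:
    """Run one simulated episode of a 1-D positioning task; return final error in metres."""
    position, velocity, target = 0.0, 0.0, 1.0
    for _ in range(steps):
        # Proportional control toward the target plus a fixed damping term.
        force = gain * (target - position) - 2.0 * velocity
        # Unit mass with viscous friction that varies between episodes.
        accel = force - friction * velocity
        velocity += accel * dt
        position += velocity * dt
    return abs(target - position)


def evaluate(gain: float, episodes: int, rng: random.Random) -> float:
    """Average final error over episodes with randomized friction."""
    errors = [simulate_episode(gain, friction=rng.uniform(0.1, 2.0)) for _ in range(episodes)]
    return sum(errors) / len(errors)


rng = random.Random(0)  # fixed seed so repeated runs give the same result
candidate_gains = [0.5, 2.0, 8.0]
scores = {g: evaluate(g, episodes=100, rng=rng) for g in candidate_gains}
best_gain = min(scores, key=scores.get)
print(f"best gain in simulation: {best_gain}")
```

Selecting the gain that performs well across varied friction values, instead of tuning against a single setting, is one simple way to hedge against mismatch between simulated and real conditions.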

Robotics foundation models—trained on diverse physical tasks—provide starting points that can be fine-tuned for specific applications. This represents a shift from building robots task-by-task to deploying general-purpose systems adaptable across roles.

Applications Across Industries

Physical AI is transforming multiple sectors:

Manufacturing

Humanoid robots are entering manufacturing environments, performing tasks that require dexterity and adaptability. From assembly operations to quality inspection, Physical AI enables robots to handle unpredictable scenarios.

Logistics

Warehouse operations increasingly rely on autonomous systems for picking, sorting, and transporting goods. Physical AI enables these systems to adapt to variable conditions.

Healthcare

AI-assisted surgical robots can help surgeons operate with greater precision and adaptability. Rehabilitation systems use Physical AI to provide personalized therapy.

Domestic Robots

The promise of general-purpose home robots is approaching reality. Physical AI enables domestic robots to navigate homes, assist with chores, and respond to human needs.

Challenges and Considerations

Despite remarkable progress, Physical AI faces significant challenges:

- Safety: Physical robots operating near humans require foolproof safety systems

- Reliability: Real-world environments present edge cases that simulation cannot fully capture

- Cost: Advanced robots remain expensive, limiting deployment

- Regulation: Liability frameworks for autonomous physical systems remain underdeveloped

The Path Forward

The Physical AI revolution is accelerating. Industry analysts project that physical AI systems will represent a $150 billion market by 2028. The convergence of capable hardware, sophisticated AI, and economic incentives continues to drive investment.

The question is no longer whether Physical AI will transform industries—but how quickly and completely.

Conclusion

Physical AI represents a fundamental evolution in how intelligent systems interact with the world. Moving beyond the screen and into physical space, AI is becoming tangibly present in our environments—from factories to homes, from hospitals to highways. The robots are no longer just coming; they're already here, operating alongside us, transforming how we work, live, and interact with machines.
