[Google TurboQuant]: How Google's 6x Memory Compression Algorithm is Reshaping AI Infrastructure

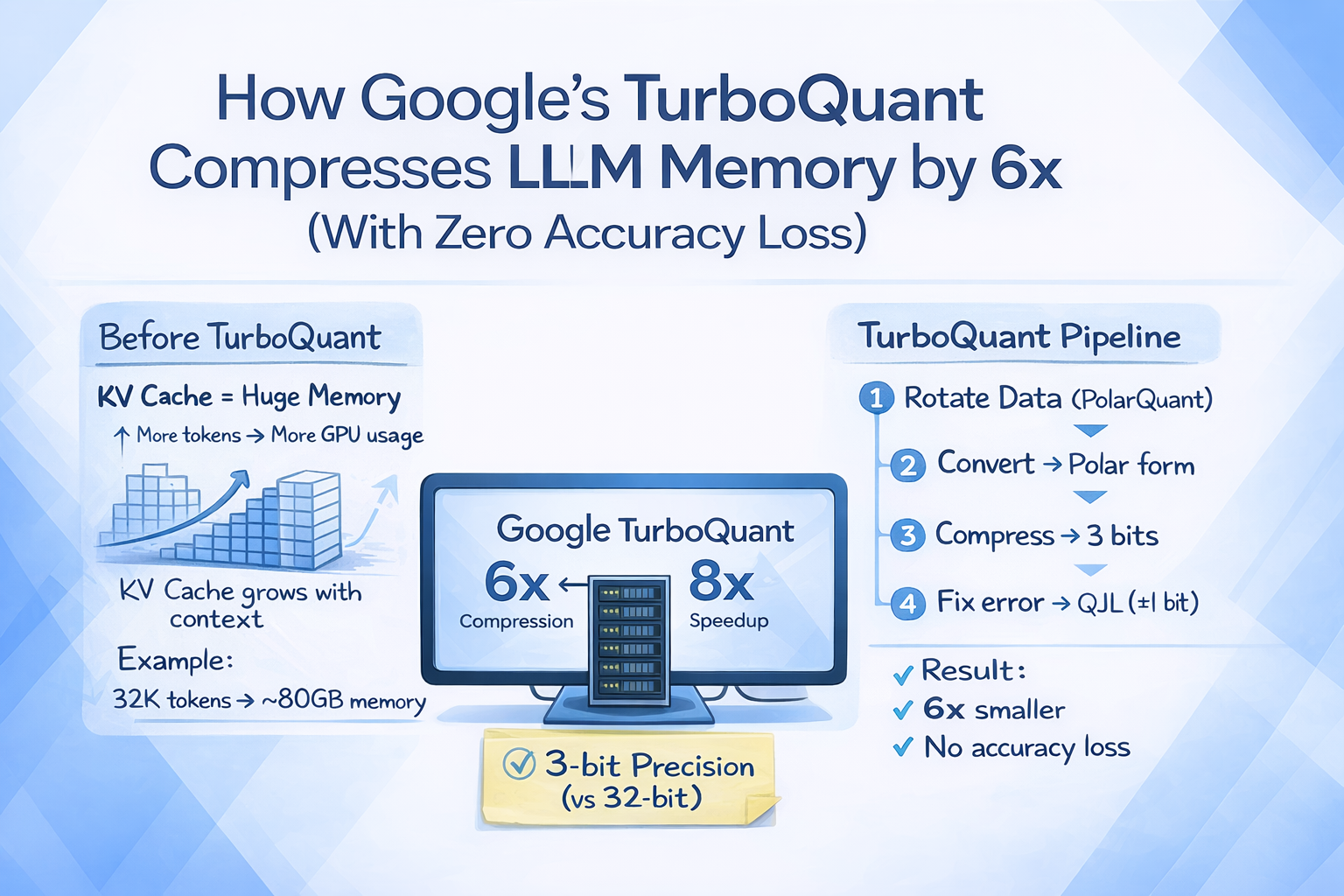

Google Research unveils TurboQuant, a revolutionary lossless AI memory compression algorithm that reduces LLM memory usage by up to 6x without sacrificing quality—potentially transforming how enterprises deploy AI at scale.

![[Google TurboQuant]: How Google's 6x Memory Compression Algorithm is Reshaping AI Infrastructure - Complete AI Infrastructure guide and tutorial](/blog-images/1774830735140-n6yxe4.webp)

The artificial intelligence industry has long grappled with a fundamental tension: larger models demand exponentially more memory, creating massive infrastructure costs that limit who can actually deploy cutting-edge AI. Google Research's March 2026 unveiling of TurboQuant—a lossless memory compression algorithm specifically designed for large language models—promises to flip this equation entirely. By achieving up to 6x memory reduction without any loss in output quality, TurboQuant represents what experts are calling the most significant optimization breakthrough in AI infrastructure since the invention of the transformer architecture itself.

Introduction

In the high-stakes world of AI infrastructure, memory is the bottleneck that defines what's possible. Every large language model requires storing billions of parameters in fast memory (RAM) during inference, and even the most well-funded enterprises find themselves constrained by the sheer cost of keeping these models running. The industry has tried quantization, pruning, and knowledge distillation—but each approach has come with significant trade-offs, typically involving some degradation in model quality.

Enter TurboQuant, unveiled by Google Research ahead of the ICLR 2026 conference. This new algorithm specifically targets the key-value (KV) cache—the memory structure that stores contextual information during language model inference—and applies advanced vector quantization techniques to shrink memory requirements by a factor of six without any measurable loss in downstream performance.

The Technical Breakthrough

Understanding the KV Cache Problem

Modern language models don't just process words one at a time—they maintain a running understanding of everything that's been said in the conversation through what's called the KV cache. This cache stores representations of each token (word or subword) that the model has processed, allowing it to maintain context across long conversations and documents.

The problem is that even a relatively small model's KV cache can consume tens of gigabytes of memory. For models with million-token context windows, the memory requirements become prohibitive—costing thousands of dollars daily in cloud computing fees.

How TurboQuant Works

Google Research's TurboQuant employs two complementary techniques:

PolarQuant: A novel vector quantization method that creates more efficient representations of the high-dimensional vectors in the KV cache by mapping them to a carefully designed codebook while preserving essential similarity relationships.

QJL (Quantized JWT-Like) Training: An optimization approach that trains the model to work with compressed representations from the beginning, rather than compressing after training.

Early results show that TurboQuant delivers "8x performance increase" in some tests while reducing memory usage by 6x—without any degradation in output quality across all benchmarks.

Industry Implications

The Cost Revolution

For enterprises currently spending millions on AI inference infrastructure, TurboQuant represents immediate savings. If deployment costs can be reduced by 5-6x, what was once prohibitively expensive becomes accessible. A startup that couldn't afford to run a 70-billion-parameter model might suddenly be able to deploy a version that was previously beyond their reach.

democratizing Access

Perhaps more importantly, TurboQuant could dramatically expand who can actually deploy frontier AI models. Academic researchers, non-profits, and smaller companies have all been priced out of the race for cutting-edge AI. With 6x memory compression, the compute requirements drop to levels that more organizations can afford.

The Hardware Angle

While TurboQuant is purely a software solution, its implications extend to hardware. If models can run on 1/6th the memory, existing hardware becomes 6x more valuable. Some analysts are already speculating that this could reduce demand for the newest, most expensive AI accelerators—at least in the short term.

What's Next

Google Research has indicated that the full technical details will be published at ICLR 2026, and the company is working to make TurboQuant available as part of their JAX and Linguistics frameworks. Competitive pressure will likely push similar breakthroughs from Microsoft, Amazon, and the AI startup ecosystem.

The internet, predictably, has already nicknamed the breakthrough "Pied Piper" (after the fictional compression algorithm from HBO's Silicon Valley)—though Google might prefer the comparison to go away.

Conclusion

TurboQuant represents a pivotal moment in AI infrastructure. After years of one-sided focus on making models larger, the industry is finally paying equal attention to making deployment more efficient. The 6x memory reduction achieved by Google Research doesn't just save money—it fundamentally changes what's economically viable. And in the competitive landscape of AI, that could matter more than any benchmark score.

The compression wars have only just begun.

Related Articles

![[AI Chips]: Arm's $15 Billion Bet: The AGI Chip That Could Reshape Data Centers - Related AI Infrastructure tutorial](/blog-images/1774572004655-7ahdec.jpeg)

[AI Chips]: Arm's $15 Billion Bet: The AGI Chip That Could Reshape Data Centers

Arm announces a new AGI-focused CPU targeting $15 billion in annual revenue by 2031, with the CPU total addressable market projected to reach $100 billion.

Google TurboQuant Redefines LLM Memory Efficiency with 8x Speed Boost

Google's new TurboQuant algorithm achieves 6x memory reduction and 8x performance increase in AI inference, sparking 'Pied Piper' comparisons across the tech industry.

![[Neuromorphic Computing]: How Brain-Inspired Chips Are Challenging the AI Hardware Status Quo - Related AI Infrastructure tutorial](/blog-images/1774830984885-6aljuz.webp)

[Neuromorphic Computing]: How Brain-Inspired Chips Are Challenging the AI Hardware Status Quo

Neuromorphic computers modeled after the human brain can now solve complex physics equations—something previously possible only with energy-hungry supercomputers. This breakthrough could fundamentally reshape AI hardware economics.